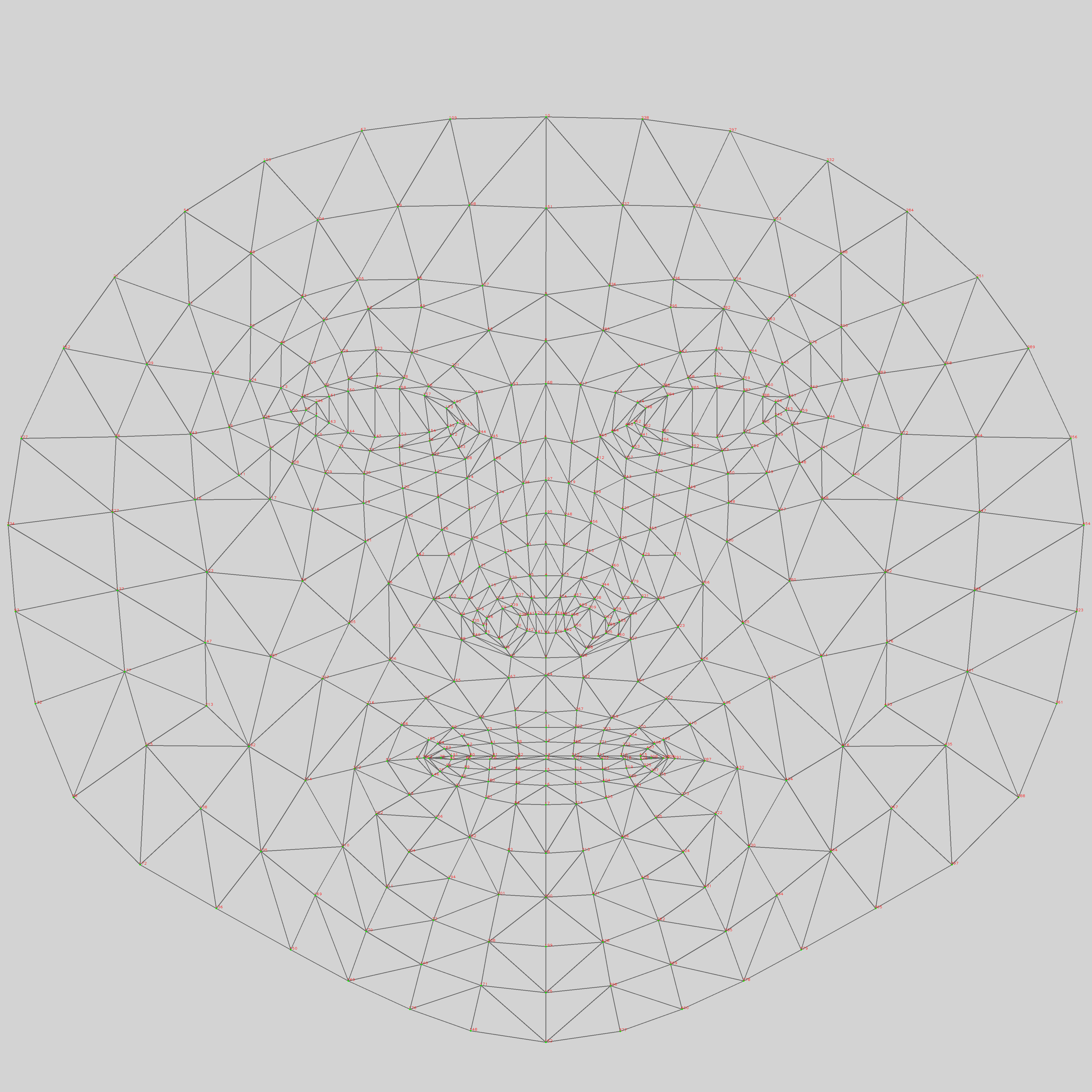

This post continues the previous one with slight modifications. Instead of just displaying the face mesh details in a Script TOP, it visualises all the face mesh points in 3D space. Because the facial landmarks returned by MediaPipe contain three-dimensional information, we can enumerate all the points and display them in a Script SOP. We use the appendPoint() function to generate the point cloud and the appendPoly() function to create the face mesh.

The data returned by MediaPipe contains the 468 facial landmarks, based on the Canonical Face Model. The face mesh information (triangles), however, is not included in the results returned by the MediaPipe solutions. Nevertheless, we can obtain it from the metadata of the facial landmarks in the MediaPipe GitHub repository. To simplify the process, I have edited the data into this CSV mesh file. The mesh.csv file is expected to be in the TouchDesigner project folder, together with the TOE project file. Here are the first few lines of the mesh.csv file:

    173,155,133
    246,33,7
    382,398,362
    263,466,249
    308,415,324

Each line describes one triangle of the face mesh. The three numbers are the indices of its vertices among the 468 facial landmarks. A visualisation of the landmarks is also available in the MediaPipe GitHub repository.
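To make the file format concrete, here is a minimal sketch that parses the five sample rows above outside TouchDesigner and checks that every index falls inside the 468 landmarks. The function name `parse_triangles` and the constant `NUM_LANDMARKS` are my own names for illustration and are not part of the project.

```python
import csv
import io

NUM_LANDMARKS = 468  # landmark count stated in this post

# The first five rows of mesh.csv, as quoted above.
sample = """173,155,133
246,33,7
382,398,362
263,466,249
308,415,324
"""

def parse_triangles(text, num_points=NUM_LANDMARKS):
    """Return a list of [i, j, k] integer index triples (hypothetical helper)."""
    triangles = []
    for row in csv.reader(io.StringIO(text)):
        if not row:
            continue  # csv.reader yields an empty list for a blank line
        if len(row) != 3:
            raise ValueError("expected 3 indices per line, got %r" % (row,))
        tri = [int(v) for v in row]
        if any(i < 0 or i >= num_points for i in tri):
            raise ValueError("index out of range in %r" % (tri,))
        triangles.append(tri)
    return triangles

triangles = parse_triangles(sample)
print(len(triangles), triangles[0])  # 5 [173, 155, 133]
```

Validating the indices up front is optional, but it turns a malformed row into a clear error message rather than a failure later when the mesh is built.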

The TouchDesigner project will render the Script SOP with the standard Geometry, Camera, Light and the Render TOP.

I won't go through all the code here. The following paragraphs cover some of the essential elements of the Python code. The first one is the initialisation of the face mesh information from the mesh.csv file.

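    triangles = []
    mesh_file = project.folder + "/mesh.csv"
    # Read one triangle per line; "with" closes the file when done.
    with open(mesh_file, "r") as mf:
        for m in mf:
            temp = m.strip().split(',')
            if len(temp) < 3:
                continue  # skip blank or incomplete lines
            # Convert to int so the values can be used as point indices.
            triangles.append([int(temp[0]), int(temp[1]), int(temp[2])])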

The variable triangles is the list of all triangles from the canonical face model. Each entry is a list of three indices into the 468 facial landmarks. The second one is the code to generate the face point cloud and the mesh.

    for pt in landmarks:
        p = scriptOp.appendPoint()
        p.x = pt.x
        p.y = pt.y
        p.z = pt.z

    for poly in triangles:
        pp = scriptOp.appendPoly(3, closed=True, addPoints=False)
        pp[0].point = scriptOp.points[poly[0]]
        pp[1].point = scriptOp.points[poly[1]]
        pp[2].point = scriptOp.points[poly[2]]

The first for loop creates all the points from the facial landmarks using the appendPoint() function. The second for loop creates all the triangular meshes from information stored in the variable triangles using the appendPoly() function.
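As a sketch of how the two loops might be packaged, the function below wraps them so that it can be called from the Script SOP's onCook() callback. It assumes the geometry is rebuilt on every cook, so it starts with scriptOp.clear(), and it skips any triangle that refers to a missing landmark. The function name `build_face_geometry` and the range check are my additions, not part of the project code.

```python
def build_face_geometry(scriptOp, landmarks, triangles):
    """Rebuild the point cloud and triangle mesh on a Script SOP.

    scriptOp  -- the Script SOP passed to onCook() in TouchDesigner
    landmarks -- iterable of objects with x, y and z attributes
    triangles -- list of [i, j, k] integer landmark indices
    (hypothetical helper, for illustration only)
    """
    # Remove the geometry of the previous cook so points do not pile up.
    scriptOp.clear()

    # One SOP point per landmark, in landmark order, so that
    # point number n corresponds to landmark n.
    count = 0
    for pt in landmarks:
        p = scriptOp.appendPoint()
        p.x = pt.x
        p.y = pt.y
        p.z = pt.z
        count += 1

    for poly in triangles:
        # Skip triangles that refer to a landmark we did not receive.
        if any(i < 0 or i >= count for i in poly):
            continue
        # addPoints=False: reuse the points created above.
        pp = scriptOp.appendPoly(3, closed=True, addPoints=False)
        for corner, index in enumerate(poly):
            pp[corner].point = scriptOp.points[index]
```

Inside TouchDesigner this would be called as `build_face_geometry(scriptOp, landmarks, triangles)` from onCook(), with `landmarks` taken from the MediaPipe result for the current frame.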

After drawing the 3D face model, we also compute the normals of the model with an additional Attribute Create SOP.
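For readers curious about what computing normals involves, here is a minimal plain-Python sketch of one common approach: take the cross product of two edges of each triangle and accumulate it on the triangle's three vertices, then normalise. This is only an illustration of the idea and is not necessarily how the Attribute Create SOP computes its normals. The function name `vertex_normals` is hypothetical.

```python
import math

def vertex_normals(points, triangles):
    """Area-weighted vertex normals (hypothetical helper).

    points    -- list of (x, y, z) tuples
    triangles -- list of (i, j, k) indices into points
    """
    sums = [[0.0, 0.0, 0.0] for _ in points]
    for i, j, k in triangles:
        ax, ay, az = points[i]
        bx, by, bz = points[j]
        cx, cy, cz = points[k]
        # Two edges of the triangle, both starting at vertex i.
        ux, uy, uz = bx - ax, by - ay, bz - az
        vx, vy, vz = cx - ax, cy - ay, cz - az
        # The cross product u x v is perpendicular to the face; its
        # length is twice the triangle area, so larger faces weigh more.
        nx = uy * vz - uz * vy
        ny = uz * vx - ux * vz
        nz = ux * vy - uy * vx
        for idx in (i, j, k):
            sums[idx][0] += nx
            sums[idx][1] += ny
            sums[idx][2] += nz

    normals = []
    for nx, ny, nz in sums:
        length = math.sqrt(nx * nx + ny * ny + nz * nz)
        if length == 0.0:
            normals.append((0.0, 0.0, 0.0))  # unused or degenerate vertex
        else:
            normals.append((nx / length, ny / length, nz / length))
    return normals

# A single triangle lying in the XY plane.
pts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
print(vertex_normals(pts, [(0, 1, 2)])[0])  # (0.0, 0.0, 1.0)
```

The direction of each normal depends on the order of the three indices in a triangle; reversing the order flips the normal.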

The final TouchDesigner project is available in the MediaPipeFaceMeshSOP repository.