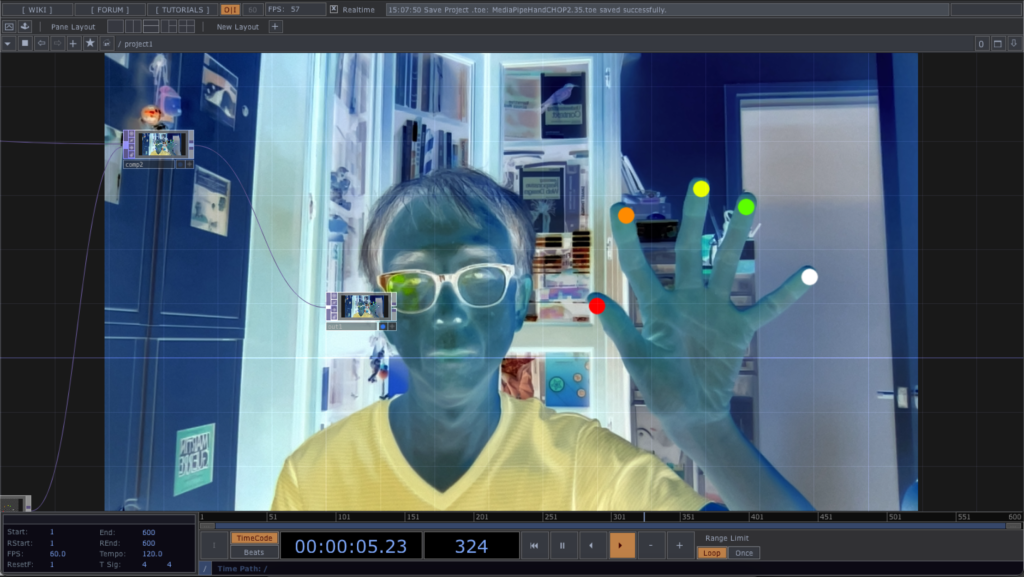

The following example presents a more general approach to obtaining the hand tracking details in a Script CHOP. We can then use other TouchDesigner CHOPs to extract the data for visualisation.

For simplicity, this example also tracks only a single hand. For each tracked hand, MediaPipe generates 21 landmarks, as shown in the diagram from the last post. The Script CHOP produces 2 channels, hand:x and hand:y, and each channel has 21 samples, one for each of the 21 MediaPipe hand landmarks. The following code segment shows how this is done.

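```python
detail_x = []
detail_y = []

# results is assumed to hold the MediaPipe Hands output computed earlier in the script
if results.multi_hand_landmarks:
    for hand in results.multi_hand_landmarks:
        # 21 landmarks per hand; each landmark becomes one sample
        for pt in hand.landmark:
            detail_x.append(pt.x)
            detail_y.append(pt.y)

scriptOp.clear()                     # remove channels left over from the previous cook
scriptOp.numSamples = len(detail_x)  # set the channel length before assigning values
scriptOp.rate = me.time.rate

tx = scriptOp.appendChan('hand:x')
ty = scriptOp.appendChan('hand:y')
tx.vals = detail_x
ty.vals = detail_y
```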
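To check the channel layout outside TouchDesigner, the extraction loop can be pulled into a plain Python function and fed stand-in objects. This is a minimal sketch: `extract_xy` is a hypothetical helper name, and the `SimpleNamespace` objects only mimic the `.landmark`, `.x` and `.y` attributes that the loop reads from the MediaPipe results; they are not the real MediaPipe types, and the coordinates are made up.

```python
from types import SimpleNamespace


def extract_xy(multi_hand_landmarks):
    """Flatten hand landmarks into two per-axis lists (hypothetical helper).

    Mirrors the Script CHOP loop: every landmark becomes one sample,
    so one tracked hand yields 21 samples per channel.
    """
    xs, ys = [], []
    if multi_hand_landmarks:  # None when no hand is detected
        for hand in multi_hand_landmarks:
            for pt in hand.landmark:
                xs.append(pt.x)
                ys.append(pt.y)
    return xs, ys


# Stand-in for one detected hand with 21 landmarks (fake coordinates).
fake_hand = SimpleNamespace(
    landmark=[SimpleNamespace(x=i / 20, y=1 - i / 20) for i in range(21)]
)

xs, ys = extract_xy([fake_hand])
print(len(xs), len(ys))  # 21 21
```

Note that the loop simply concatenates landmarks when more than one hand is reported, so each channel would then hold 42 samples instead of 21; restricting detection to a single hand keeps the 21-sample layout.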

The TouchDesigner project also uses a Shuffle CHOP to swap channels and samples, turning the 21 samples into 21 channels, one per landmark. We can then select the 5 channels corresponding to the 5 fingertips (landmarks 4, 8, 12, 16 and 20) for visualisation. The final project is available for download in the MediaPipeHandCHOP2 folder of the GitHub repository.
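The shuffle-and-select step can be modelled in plain Python to make the data reshaping concrete. This is a sketch only, not TouchDesigner code: the function names are hypothetical, channels are represented as a dict of lists, and the input values are made up. Transposing 2 channels of 21 samples gives 21 per-landmark channels, each holding an x and a y value, from which the fingertip indices are picked.

```python
# Fingertip landmark indices in MediaPipe's 21-point hand model.
FINGERTIPS = {"thumb": 4, "index": 8, "middle": 12, "ring": 16, "pinky": 20}


def swap_channels_and_samples(channels):
    """Transpose {name: [s0, s1, ...]} into one dict per sample index.

    With 2 channels x 21 samples this yields 21 entries, where entry i
    holds landmark i's values, e.g. {"hand:x": x_i, "hand:y": y_i}.
    """
    names = list(channels)
    columns = zip(*(channels[n] for n in names))
    return [dict(zip(names, col)) for col in columns]


def fingertips(landmark_channels):
    """Keep only the five fingertip entries out of the 21 landmarks."""
    return {name: landmark_channels[i] for name, i in FINGERTIPS.items()}


# Made-up Script CHOP output: 2 channels with 21 samples each.
hand = {
    "hand:x": [i / 20 for i in range(21)],
    "hand:y": [1 - i / 20 for i in range(21)],
}

per_landmark = swap_channels_and_samples(hand)
print(len(per_landmark))  # 21
tips = fingertips(per_landmark)
print(sorted(tips))  # ['index', 'middle', 'pinky', 'ring', 'thumb']
```

Keeping the fingertip indices in one named mapping makes it easy to change which landmarks are visualised without touching the reshaping logic.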