This is the new book I published in 2017 with Apress (Springer). It introduces the use of OpenCV in Processing through a custom library I developed.

Check out more details at the Apress website.

This is the book I wrote for Packt Publishing in 2013. It is an introductory book on multimedia production with the GEM library in the Pure Data graphical programming language.

05/07/2014 – The library has been renamed again, to Kinect4WinSDK, to avoid the prefix P or P5. It was built on Windows 7 with Kinect for Windows SDK 1.8, Java JRE 1.7u60 and Processing 2.2.1.

05/04/2014 – The library has been renamed to P5Kinect, following a suggestion from the Processing community, to avoid confusion with the official Processing classes.

28/03/2014 – The library has been updated for use with Kinect for Windows SDK 1.8, Java JRE 1.7u51 and Processing 2.1.1.

The Kinect for Processing library is a Java wrapper of the Kinect for Windows SDK and, naturally, it runs on the Windows platform. So far, I have tested it only on Windows 7. The following four functions are implemented. All images are currently 640 x 480.

GetImage() returns a 640 x 480 ARGB PImage.

GetDepth() returns a 640 x 480 ARGB PImage. The image is, however, grey scale only. Its resolution is also reduced from the original 13 bits to 8 bits for compatibility with a 256-level grey scale image.
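To illustrate the bit-depth reduction, here is a minimal Python/NumPy sketch that maps 13-bit depth values onto 8-bit grey levels by dropping the five least significant bits. The right shift is an assumption made for illustration and the library's actual conversion may differ. The function name `depth_to_grey` is hypothetical.

```python
import numpy as np

def depth_to_grey(depth13):
    """Map 13-bit depth values (0..8191) to 8-bit grey levels (0..255)."""
    depth13 = np.asarray(depth13, dtype=np.uint16)
    # Assumed conversion: dropping the 5 least significant bits
    # keeps the top 8 of the 13 bits.
    return (depth13 >> 5).astype(np.uint8)

# A few sample depth readings, from nearest (0) to the 13-bit maximum (8191).
sample = np.array([0, 31, 32, 4096, 8191], dtype=np.uint16)
grey = depth_to_grey(sample)
```

With this mapping, 32 consecutive depth values share one grey level, which is the precision lost in the 8-bit image.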

GetMask() returns a 640 x 480 ARGB PImage. The background is transparent, using the alpha channel. Only the areas occupied by players are opaque, and they show the aligned RGB image of each player.
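The masking idea can be sketched in Python/NumPy as follows. This is not the library's code. It assumes a hypothetical per-pixel player index map (0 for background, non-zero for a player) that is already aligned with the colour image, and it stores the result in RGBA order rather than ARGB. The name `player_mask` is made up for this example.

```python
import numpy as np

def player_mask(rgb, player_index):
    """Return an RGBA image that is opaque only where a player is present.

    rgb          -- (H, W, 3) uint8 colour image
    player_index -- (H, W) integer map, 0 = background (assumed input)
    """
    h, w, _ = rgb.shape
    rgba = np.zeros((h, w, 4), dtype=np.uint8)   # fully transparent black
    is_player = player_index > 0
    rgba[is_player, :3] = rgb[is_player]         # copy the player's colours
    rgba[is_player, 3] = 255                     # make those pixels opaque
    return rgba

# Tiny 2 x 2 example: only the top-right pixel belongs to a player.
rgb = np.full((2, 2, 3), 200, dtype=np.uint8)
index = np.array([[0, 1], [0, 0]])
masked = player_mask(rgb, index)
```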

Skeleton tracking is a bit complicated. The library will expect 3 event handlers in your Processing sketch. Each event handler uses one or two arguments of type SkeletonData (to be explained later). Each SkeletonData represents a human figure that appears, disappears or moves in front of the Kinect camera.

appearEvent – it is triggered whenever a new figure appears in front of the Kinect camera. The SkeletonData keeps the id and position information of the new figure.

disappearEvent – it is triggered whenever a tracked figure disappears from the screen. The SkeletonData keeps the id and position information of the figure that has left.

moveEvent – it is triggered whenever a tracked figure stays within the screen and may move around. The first SkeletonData keeps the old position information and the second SkeletonData maintains the new position information of the moving figure.

Please note that a new figure may not represent a genuinely new human player. An existing player who goes off screen and comes back may be treated as new.

The SkeletonData class is a subset of the NUI_SKELETON_DATA structure. It implements the following public fields:

    public int trackingState;
    public int dwTrackingID;
    public PVector position;
    public PVector[] skeletonPositions;
    public int[] skeletonPositionTrackingState;

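    import kinect4WinSDK.Kinect;
    import kinect4WinSDK.SkeletonData;

    Kinect kinect;
    ArrayList<SkeletonData> bodies;

    void setup() {
      size(640, 480);
      background(0);
      kinect = new Kinect(this);
      smooth();
      bodies = new ArrayList<SkeletonData>();
    }

    void draw() {
      background(0);
      // Colour, depth and player-mask images, each drawn in one quarter of the window.
      image(kinect.GetImage(), 320, 0, 320, 240);
      image(kinect.GetDepth(), 320, 240, 320, 240);
      image(kinect.GetMask(), 0, 240, 320, 240);
      for (int i=0; i<bodies.size(); i++) {
        // Per-body drawing code is missing from this listing.
      }
    }

    // Reconstructed from the handler description above: store each new tracked figure.
    void appearEvent(SkeletonData _s) {
      if (_s.trackingState == Kinect.NUI_SKELETON_NOT_TRACKED) {
        return;
      }
      synchronized(bodies) {
        bodies.add(_s);
      }
    }

    // Remove the stored figure whose tracking ID matches the one that disappeared.
    void disappearEvent(SkeletonData _s) {
      synchronized(bodies) {
        for (int i=bodies.size()-1; i>=0; i--) {
          if (_s.dwTrackingID == bodies.get(i).dwTrackingID) {
            bodies.remove(i);
          }
        }
      }
    }

    // Copy the new data (_a) over the stored entry for the same figure (_b).
    void moveEvent(SkeletonData _b, SkeletonData _a) {
      if (_a.trackingState == Kinect.NUI_SKELETON_NOT_TRACKED) {
        return;
      }
      synchronized(bodies) {
        for (int i=bodies.size()-1; i>=0; i--) {
          if (_b.dwTrackingID == bodies.get(i).dwTrackingID) {
            bodies.get(i).copy(_a);
            break;
          }
        }
      }
    }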

It is a simple smile detection library for the open source programming environment – Processing.

Download the sample application, which includes the library in its code folder.

This is my second Processing library to implement a simple interface to the ARToolKit using the JARToolKit (obsolete).

Download the SimpleARToolKit library here.

This is the first library I wrote for Processing. It is, however, obsolete, as the OpenCV library already includes face detection.

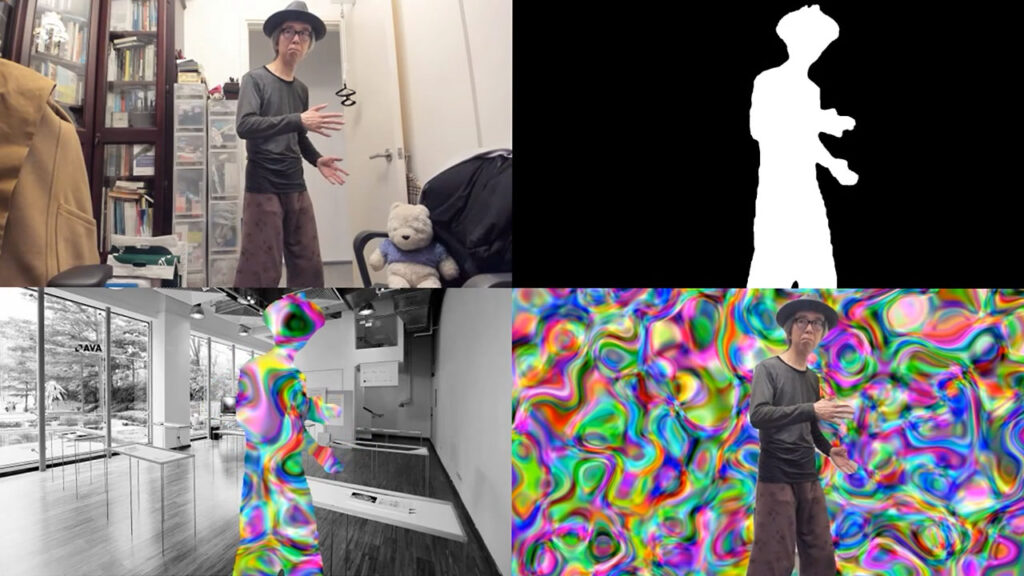

This tutorial is an updated version of the MediaPipe Pose example. It uses the new segmentation mask function to identify the tracked human body, and a Script TOP to generate the mask image. Users can further enhance the image with the Threshold TOP for display purposes. As in the previous tutorials, it assumes that Python 3.7 and the MediaPipe library have been installed through Pip.

The source TouchDesigner project file is available in my TouchDesigner GitHub repository. The Python code is relatively straightforward. The pose tracking results include an array (segmentation_mask) of the same size as the tracked image. Each pixel has a value between 0.0 and 1.0. Darker values are the background, while brighter values are likely to be the tracked body. Here is the full listing.

    # me - this DAT
    # scriptOp - the OP which is cooking

    import numpy as np
    import cv2
    import mediapipe as mp

    mp_drawing = mp.solutions.drawing_utils
    mp_pose = mp.solutions.pose

    pose = mp_pose.Pose(
        min_detection_confidence=0.5,
        min_tracking_confidence=0.5,
        enable_segmentation=True
    )

    def onSetupParameters(scriptOp):
        return

    # called whenever custom pulse parameter is pushed
    def onPulse(par):
        return

    def onCook(scriptOp):
        input = scriptOp.inputs[0].numpyArray(delayed=True)
        if input is not None:
            # Convert the float RGBA input to an 8-bit RGB image for MediaPipe.
            image = cv2.cvtColor(input, cv2.COLOR_RGBA2RGB)
            image *= 255
            image = image.astype('uint8')
            results = pose.process(image)

            if results.segmentation_mask is not None:
                # Scale the 0.0 to 1.0 mask to an 8-bit grey RGB image.
                rgb = cv2.cvtColor(results.segmentation_mask, cv2.COLOR_GRAY2RGB)
                rgb = rgb * 255
                rgb = rgb.astype(np.uint8)
                scriptOp.copyNumpyArray(rgb)
            else:
                # No body tracked: output a black frame.
                black = np.zeros(image.shape, dtype=np.uint8)
                scriptOp.copyNumpyArray(black)
        return
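The Threshold TOP step mentioned earlier can also be approximated in Python. The sketch below turns a mask with values between 0.0 and 1.0 into a pure black and white image. The helper name `mask_to_binary` and the 0.5 cut-off are assumptions for illustration and are not part of the project file.

```python
import numpy as np

def mask_to_binary(segmentation_mask, threshold=0.5):
    """Turn a float mask in [0.0, 1.0] into a black/white uint8 RGB image."""
    body = segmentation_mask > threshold          # True where the body is likely
    grey = body.astype(np.uint8) * 255            # 0 for background, 255 for body
    return np.stack([grey, grey, grey], axis=-1)  # repeat into three channels

# Small synthetic mask standing in for results.segmentation_mask.
demo = np.array([[0.1, 0.9], [0.5, 0.7]], dtype=np.float32)
binary = mask_to_binary(demo)
```

A pixel exactly at the threshold is treated as background here. Raising the threshold trims soft edges around the body, at the cost of losing some of the silhouette.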

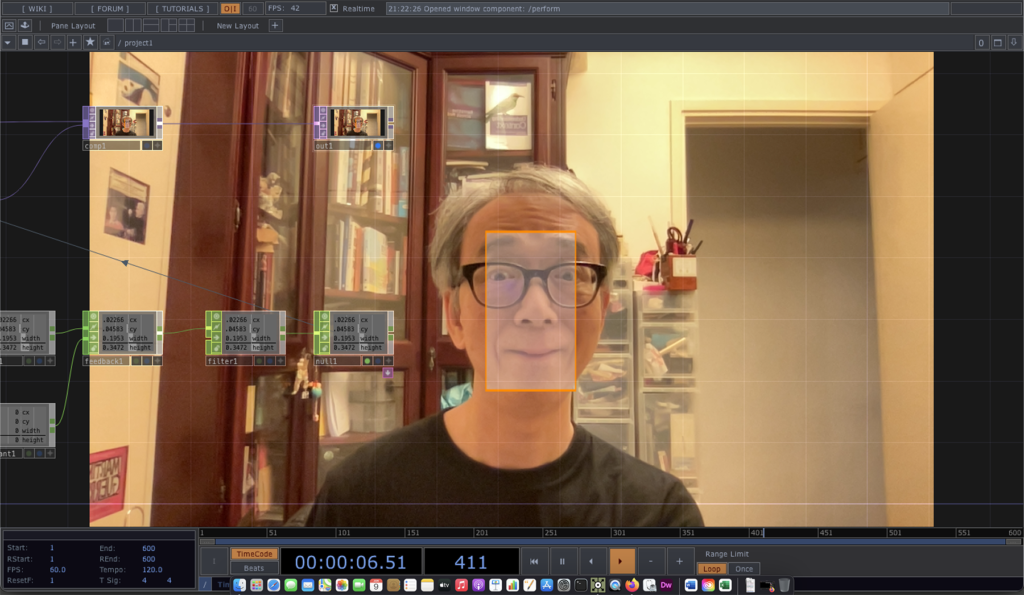

This example continues to use a Script CHOP, Python and TouchDesigner, this time for face detection. Instead of the MediaPipe library, it uses the Dlib Python binding, and it is based on the face detector example program from the Dlib distribution. Dlib is a popular C++-based programming toolkit for various applications. Its image processing library contains a number of face detection functions, and a Python binding is also available.

The main face detection capability is defined in the following statements.

    import dlib

    detector = dlib.get_frontal_face_detector()
    rects = detector(image, 0)

The Script CHOP generates a set of channels for the largest face it detects in the live image.
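One way to pick the largest face from the detector output is sketched below. It assumes each rectangle exposes `left()`, `top()`, `right()` and `bottom()`, as Dlib rectangles do. The helper names `rect_area` and `largest_face` and the stand-in `Box` class are hypothetical and exist only so the example runs without Dlib installed. The project file may select the face differently.

```python
def rect_area(rect):
    """Area of a rectangle exposing left(), top(), right() and bottom()."""
    width = max(0, rect.right() - rect.left())
    height = max(0, rect.bottom() - rect.top())
    return width * height

def largest_face(rects):
    """Return the rectangle with the largest area, or None if there are none."""
    return max(rects, key=rect_area, default=None)

class Box:
    """Stand-in for a detector rectangle, for demonstration only."""
    def __init__(self, left, top, right, bottom):
        self._coords = (left, top, right, bottom)
    def left(self):
        return self._coords[0]
    def top(self):
        return self._coords[1]
    def right(self):
        return self._coords[2]
    def bottom(self):
        return self._coords[3]

faces = [Box(10, 10, 50, 50), Box(100, 80, 220, 200), Box(0, 0, 20, 30)]
biggest = largest_face(faces)
```

In the Script CHOP, the same helper could be applied to the `rects` returned by the detector, with the `None` case covering frames in which no face is found.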

The complete project is available in the FaceDetectionDlib1 GitHub folder.